DocChat

Tech Stack

Role

Full-stack Developer

Team Size

1

Duration

February 2026 - Ongoing

Project Overview

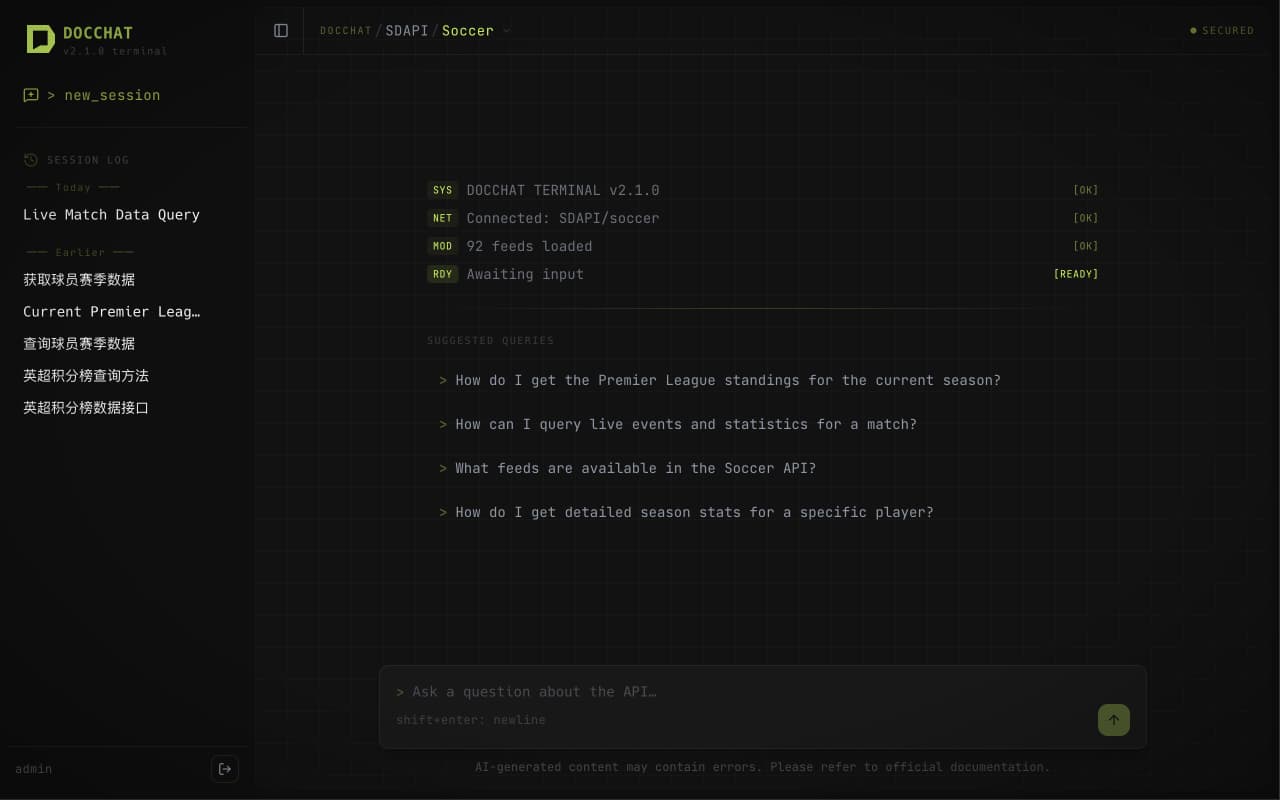

DocChat is an AI-powered API documentation assistant that helps developers locate endpoints, understand parameters, and explore data structures through natural language. The system uses a two-phase routing architecture, deterministic first with an LLM fallback: ~80% of queries are resolved without any LLM calls, which significantly reduces cost. It is currently launched with the Opta/Stats Perform sports data APIs as the first use case, supporting Motorsport (7 feeds) and Soccer (92 feeds), and uses a 4-layer knowledge injection system to keep answers precise. The long-term vision is a general-purpose API documentation platform where users can upload any OpenAPI spec and auto-generate a knowledge base. The frontend features a terminal-style dark theme (neon green with a Matrix rain background), SSE streaming conversations, session history management, and user authentication.
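The two-phase routing described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual router: the trigger phrases, endpoint identifiers, and the `llm_fallback` callable are all hypothetical, and the longest-phrase tie-break is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical trigger table: keyword phrase -> endpoint id.
# The real system is described as having ~2650 triggers; these are made up.
TRIGGERS = {
    "match stats": "soccer/example-match-stats",
    "lap times": "motorsport/example-lap-times",
    "squad": "soccer/example-squads",
}


@dataclass
class RouteResult:
    endpoint: str
    used_llm: bool


def deterministic_route(query: str) -> Optional[str]:
    """Phase 1: pick the endpoint whose longest trigger phrase occurs in the query."""
    q = query.lower()
    hits = [phrase for phrase in TRIGGERS if phrase in q]
    if not hits:
        return None
    return TRIGGERS[max(hits, key=len)]


def route(query: str, llm_fallback: Callable[[str], str]) -> RouteResult:
    """Try keyword matching first; call the LLM only when nothing matches."""
    endpoint = deterministic_route(query)
    if endpoint is not None:
        return RouteResult(endpoint, used_llm=False)
    # Phase 2: assumed fallback that asks an LLM to choose an endpoint.
    return RouteResult(llm_fallback(query), used_llm=True)
```

Because the fallback is passed in as a plain callable, the deterministic path can be tested without any model access.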

Highlights

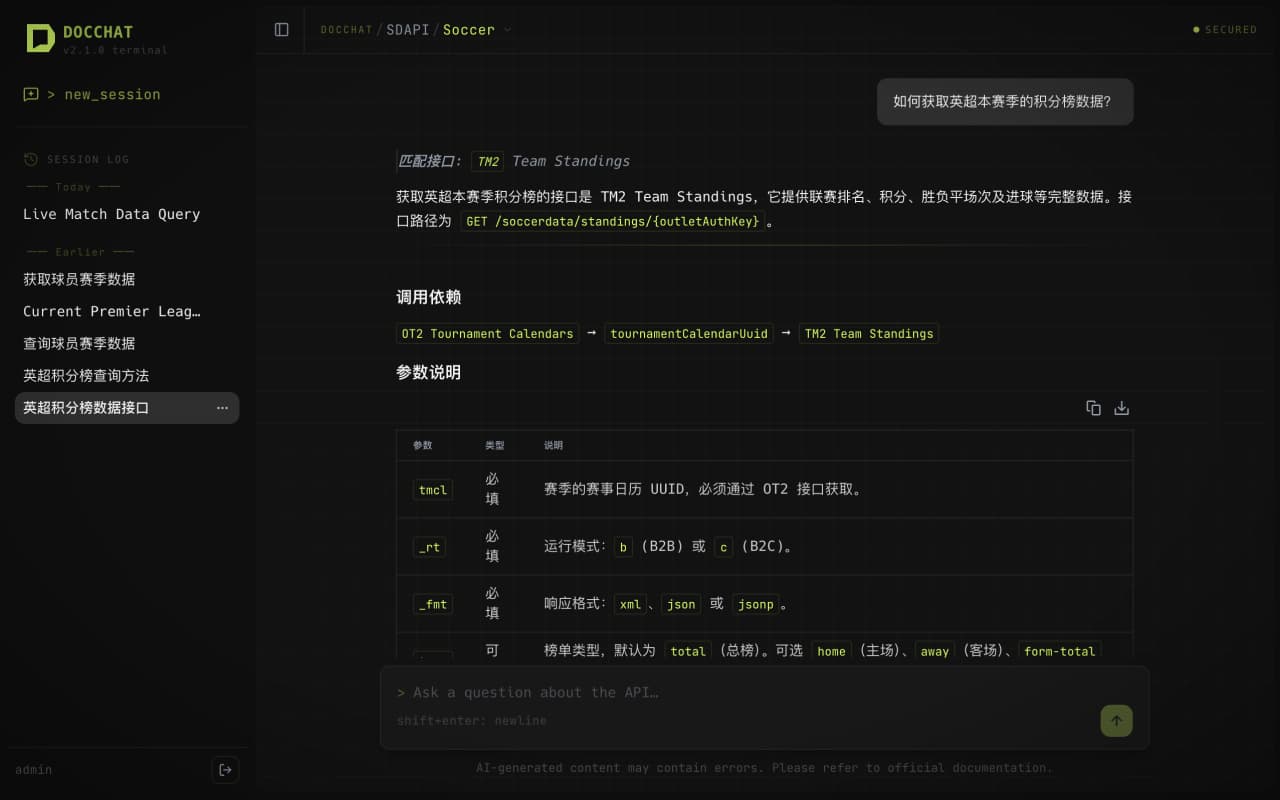

- Two-phase routing: deterministic keyword matching first (~2650 triggers), then LLM fallback; ~80% of queries are resolved with zero LLM calls

- 4-layer knowledge injection: endpoint-level docs → domain overview → shared knowledge base → dynamic topic routing (INDEX.yaml driven)

- Current use case: Opta sports data API, with 99 endpoints covered (Motorsport 7 + Soccer 92), each with full knowledge files
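As a rough sketch of how the four knowledge layers might be assembled into a prompt context, the example below stacks them in the order listed above. The in-memory dictionaries stand in for the knowledge files and for INDEX.yaml; every name and document string here is hypothetical.

```python
from typing import List

# Hypothetical in-memory stand-ins for the knowledge files on disk.
ENDPOINT_DOCS = {"soccer/example-feed": "Endpoint doc: parameters and response fields."}
DOMAIN_OVERVIEWS = {"soccer": "Soccer domain overview."}
SHARED_KNOWLEDGE = "Shared knowledge: authentication and common formats."
# Stand-in for INDEX.yaml: topic keyword -> topic document.
TOPIC_INDEX = {"pagination": "Topic: how paging parameters work."}


def build_context(endpoint: str, query: str) -> List[str]:
    """Collect knowledge layers from most specific to most general, then topics."""
    domain = endpoint.split("/", 1)[0]
    layers: List[str] = []
    # Layer 1: endpoint-level docs.
    if endpoint in ENDPOINT_DOCS:
        layers.append(ENDPOINT_DOCS[endpoint])
    # Layer 2: domain overview.
    if domain in DOMAIN_OVERVIEWS:
        layers.append(DOMAIN_OVERVIEWS[domain])
    # Layer 3: shared knowledge base, always included in this sketch.
    layers.append(SHARED_KNOWLEDGE)
    # Layer 4: topics selected dynamically by keywords found in the query.
    q = query.lower()
    layers.extend(doc for keyword, doc in TOPIC_INDEX.items() if keyword in q)
    return layers
```

Calling `build_context("soccer/example-feed", "How does pagination work?")` yields all four layers, while a query with no indexed topic yields only the first three.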

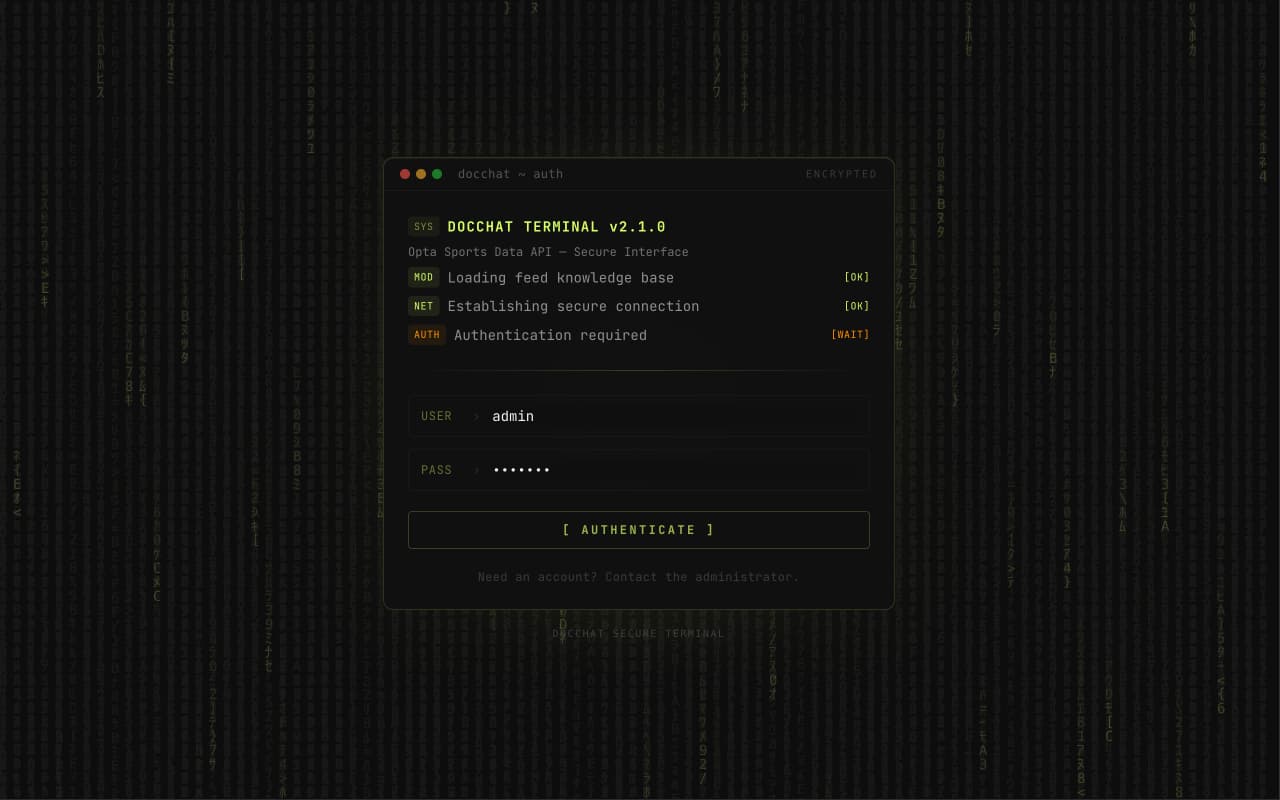

- Terminal-style UI: neon green (#DCFF71) with a Matrix rain background, Hack font, and a glassmorphism input

- SSE streaming chat + Streamdown Markdown rendering + syntax highlighting + bilingual (EN/CN) support

- Full user system: JWT auth (Access + Refresh tokens), conversation history, auto-generated session titles
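For the SSE streaming chat item, the sketch below shows the standard Server-Sent Events wire framing (`data:` lines terminated by a blank line). How the project's backend actually frames its chunks is not described here, so the `done` event name and the `[DONE]` sentinel are assumptions made for illustration.

```python
from typing import Iterable, Iterator, Optional


def sse_event(data: str, event: Optional[str] = None) -> str:
    """Format one SSE event; multi-line data becomes one `data:` line per line."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    for part in data.split("\n"):
        lines.append(f"data: {part}")
    # A blank line terminates the event.
    return "\n".join(lines) + "\n\n"


def stream_answer(chunks: Iterable[str]) -> Iterator[str]:
    """Yield each answer chunk as an SSE event, then a final marker event."""
    for chunk in chunks:
        yield sse_event(chunk)
    # Hypothetical end-of-stream marker; the real protocol may differ.
    yield sse_event("[DONE]", event="done")
```

A web framework would typically return this generator as a streaming response with the `text/event-stream` content type.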

Challenges

- Organizing knowledge and routing queries precisely across a large set of API endpoints, while preventing LLM hallucination over a massive body of documentation

- Data fusion from three sources: OpenAPI specs + PDF documentation + hand-written knowledge files

- Consuming SSE streams from a statically exported frontend (`output: export`)

- Docker containerized deployment with PostgreSQL network topology configuration

Solutions

- Built an enhanced index JSON (Swagger parsing + PDF extraction + metadata injection) that is loaded into memory at startup

- Designed META.yaml with triggers + fields + scenarios for multi-dimensional matching, covering the vast majority of queries deterministically

- Vercel AI SDK v6 + TextStreamChatTransport for frontend SSE consumption

- Docker Compose with external network sharing PostgreSQL container, Nginx reverse proxy + Let's Encrypt HTTPS
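The multi-dimensional matching over triggers, fields, and scenarios could look something like the sketch below. The `META` dictionary mimics what a parsed META.yaml might contain; the entries, the weights, and the `min_score` threshold are all assumptions rather than the project's real values.

```python
from typing import Dict, List, Optional

# Hypothetical entries shaped like parsed META.yaml files, one per endpoint.
META: Dict[str, Dict[str, List[str]]] = {
    "soccer/example-squads": {
        "triggers": ["squad", "roster"],
        "fields": ["shirtNumber", "position"],
        "scenarios": ["list players in a team"],
    },
    "soccer/example-match-stats": {
        "triggers": ["match stats"],
        "fields": ["possession", "shots"],
        "scenarios": ["compare two teams in a match"],
    },
}

# Assumed weights: an explicit trigger counts more than a field or scenario hit.
WEIGHTS = {"triggers": 3, "fields": 2, "scenarios": 1}


def score(query: str, meta: Dict[str, List[str]]) -> int:
    """Sum the weights of every trigger, field, and scenario phrase found in the query."""
    q = query.lower()
    total = 0
    for dimension, weight in WEIGHTS.items():
        for phrase in meta.get(dimension, []):
            if phrase.lower() in q:
                total += weight
    return total


def best_match(query: str, min_score: int = 2) -> Optional[str]:
    """Return the highest-scoring endpoint, or None so the caller can fall back to the LLM."""
    scored = [(score(query, meta), name) for name, meta in META.items()]
    top_score, top_name = max(scored)
    return top_name if top_score >= min_score else None
```

Returning `None` below the threshold keeps the deterministic phase conservative: an ambiguous query is handed to the fallback instead of being forced onto a weak match.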

Matrix rain background + terminal emulator-style secure authentication interface

Soccer API with 92 feeds loaded, sidebar session history, suggested query prompts

Natural language query → precise TM2 endpoint match with structured parameter docs and dependency chain